The New KYC Threat Model: When Documents Become Executable

A recent demo at the [un]prompted 2026 Conference revealed a shift identity teams can no longer ignore: in AI-driven KYC, documents are no longer passive inputs. Once OCR output reaches an AI agent with tool access, an uploaded file can become an untrusted execution surface.

![Sean Park on stage at [un]prompted 2026](https://cdn.prod.website-files.com/691faa7e1a497598820f663b/6a016a9d2440d95e417666f8_Sean%20Park%20on%20stage%20at%20%5Bun%5Dprompted%202026%20.png)

Sean Park's recent session at [un]prompted 2026 mattered for a reason deeper than "another fake passport demo." Trend Micro's official recap shows the team demonstrated how a document with hidden injects could steer an AI-driven KYC pipeline into cross-record reads and writes.

The company framed it as a research demonstration, not a publicly described production breach. In that same recap, Trend Micro describes the demo using a real-world stack built with FastAPI, Claude Code, and a SQLite MCP backend, and the team scaled the work into 2,600 automated tests across 13 models.

That changes the conversation. For years, document fraud in KYC was discussed mostly as a forgery problem: edited IDs, template recreation, screen replays, synthetic IDs.

Agentic AI adds a more fundamental risk. A document can now be both forged and weaponized. Anthropic notes that any agent processing untrusted content is exposed to prompt injection risk, and explicitly lists embedded documents as part of that attack surface.

OWASP goes further, describing hidden text, invisible characters, metadata tricks, and multimodal payloads as standard indirect prompt injection patterns, and warns that excessive agency makes the impact far worse when models can call tools or interact with other systems.

The most important part of this story is not the headline, it is the landing point.

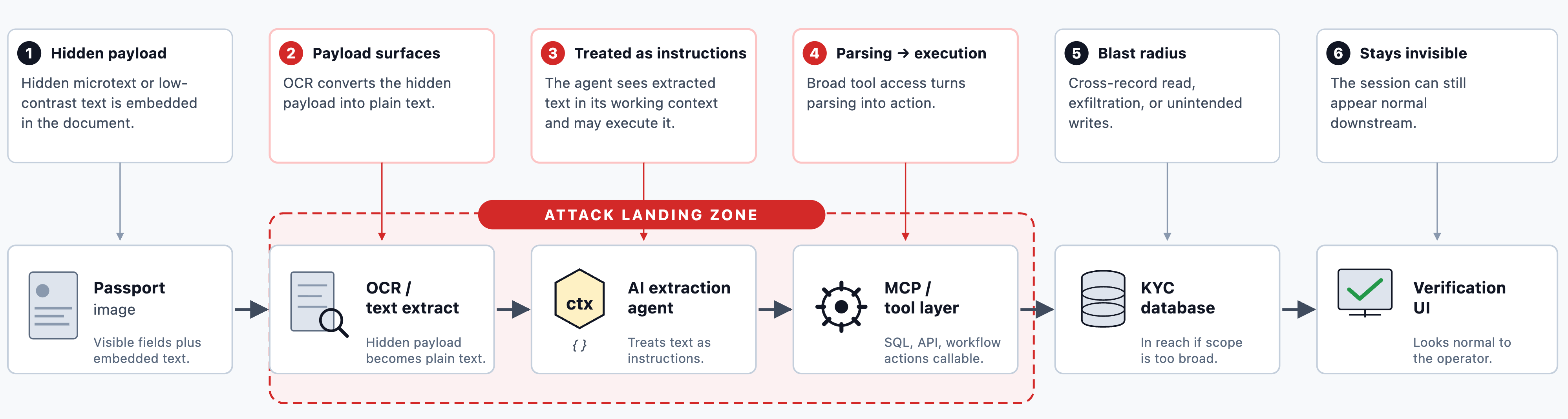

The attack does not sit at final decisioning, and it does not depend on bypassing traditional access controls. It lands between OCR or text extraction and agent orchestration.

The conference abstract describes KYC workflows that increasingly delegate passport parsing, database writes, and customer verification to AI-driven extraction agents.

A community recap of the session published a slide showing the end-to-end flow:Passport Image → OCR/Text Extract → AI Extraction Agent → SQLite MCP Server → KYC Database → User Verification UI → KYC Complete.That is the blind spot, the moment when the document stops being "just an image" and becomes part of the agent's operational context.

This is why standard architecture has little defense at that layer. Most identity stacks were built to control capture quality, liveness, face matching, watchlists, policy rules, and final approval thresholds.

But once extracted document text enters an LLM context alongside system instructions (and the agent can also call tools) the parser layer quietly becomes an execution layer.

Schema validation does not solve that. A model cannot reliably distinguish "document data" from "instructions hidden inside the document" if both appear in the same context. This is exactly the problem OWASP points to with the combination of prompt injection and excessive agency.

Architecturally, the most exposed vendors are not necessarily the ones with the weakest OCR. They are the ones whose ingestion layer is overly trusting. Three patterns define the risk:

That is not a brand-level accusation. It is an architectural inference: the closer OCR output sits to a tool-calling agent without isolation, the larger the blast radius.

For identity teams, the design principle is simple but strict: document ingestion can no longer be designed as "just extraction." It must be designed as an untrusted-input boundary.

That is why the right response is not a single anti-fake model, it is a layered defense.

Incode recently launched Deepsight for Documents, describing it as a detection layer for AI-generated and injection-based document fraud inside existing identity verification flows.

Incode outlines three coordinated document-detection layers: behavioral signals, device integrity, and perception-layer analysis powered by a Vision Language Model. Broader Deepsight materials also highlight camera integrity and document authenticity as part of a full-journey verification defense.

The timing matters. Incode reports a 9.7x increase in GenAI-driven document fraud attempts over the past two years. In the same public materials, the company says Deepsight for Documents catches 8.8x more fraudulent sessions than traditional document checks alone, with a 0.04% false rejection rate.

On a controlled red-team dataset, Incode reports a 100% detection rate versus 40% for standard identity verification alone. Strip away the marketing language and the core point stands: document defense must be AI-native, continuously trained, and built for synthetic plus injection-based fraud, not static template checks alone.

The story does not end at the document layer. One successful synthetic document session rarely stays isolated, it can become the seed for repeat fraud, mule onboarding, or coordinated attacks. That is where session intelligence and network intelligence become essential.

Incode's product direction aligns well with that shift. Risk AI Agent is described as a holistic decisioning layer that weighs signals in context and continuously adapts to fraud and conversion patterns.

A more strategic question follows from all of this. As enterprises move deeper into agentic workflows, verifying only the human and the document will not be enough. They will also need to know which agent is acting, on whose behalf, within what scope, and under what revocation model.

Incode already frames that problem through Agentic Identity: verified human owner binding, scoped consent and tokenization, continuous behavioral monitoring, and integrations with MCP and other agentic protocols. Instead of a side topic, this is the logical continuation of the same trust problem. If documents can influence agents, agents themselves must also become part of the trust and accountability model.

The main lesson from this demo is not that AI should be removed from KYC. The trust boundary has moved. Uploaded documents are no longer passive attachments but untrusted inputs that can influence model behavior and, through that behavior, affect connected systems.

Modern KYC architecture must do four things at once:

That is where the next layer of trust in identity platforms will be won.

Ready to see how Incode detects AI-generated and injection-based document fraud? Explore Incode Deepsight for Documents.